Imperative Programming with Poly

Category theory has been applied fruitfully to functional programming for decades, leading some to think that functional programming represents the one true way of doing math on a computer. However, the stubborn fact that the internals of computers and the world itself are both stateful means that imperative programming is darn useful. And following my ACT motto of “if the practitioners are doing it, they can do it better with category theory”… comes the flow of research that lead to this blog post.

1 Introduction

In the messy, tangled family tree of programming languages, two candidates for “roots” are the lambda calculus and turing machines. The lambda calculus had a strong influence on LISP and ML and other such languages, while turing machines and their more convenient cousins register machines and stack machines had a strong influence on assembly language, C, and really any language that runs on an actual CPU. This strain of programming languages are known as “imperative” languages, and are characterized by “side-effects”, i.e. functions that don’t just return something, but have some effect in the world.

Traditionally, side-effects in functional programming are handled using monads. However, David Spivak and I are attempting a different approach.

In this post, we are going to discuss turing machines via a particular construction in Poly, and then show the general framework we have developed. Not all of the puzzle pieces are there yet in order to make this a fully-fledged theory of imperative programming, but we have to walk before we can run.

This post will assume some knowledge of Poly because it is a post about using Poly for something, not a Poly tutorial. Specifically, the reader should be familiar with polynomials, maps of polynomials, and polynomial composition.

2 Turing Machines

We start by reviewing what precisely a turing machine is. This “review” will sneakily introduce the main concepts of this blog post, so it may present the logical structure of a turing machine in a different way than is familiar.

We start by picturing a turing machine as a black box with a button on it. The button can be three colors: blue, green, and red. When the button is green, it can be pressed; when it is blue or red it cannot be pressed and we say that the turing machine has halted in an accepting (blue) or rejecting (red) state. When the button is pressed, the color can change or stay the same; when the box is left alone it does nothing.

Basically, the way the box works is that you want to answer a yes/no question, someone sets up the box to answer the question, and then you poke the button until the button turns blue or red; blue means the answer is yes and red means the answer is no.1

Mathematically, this “interface” to the black box is a polynomial p = \{{\color{green} G}\}y + \{{\color{red} R},{\color{blue} B}\}. The three positions are green, red and blue. The one direction at the green position corresponds to the ability to push the button; the lack of directions at red and blue mean that there’s no action to do. The black box is a coalgebra of p; i.e. a set S of “states” along with a function f \colon S \to p(S). This function sends each state s to a position of p (determining which color the button should be in that state) and then if that color is green, we also get a new state of the box.

Now, imagine opening up this box. Inside the box is a very long and very thin roll of paper (which we call the tape for historical reasons) with regularly spaced squares on it. Above the tape is a mechanical gripper holding a pencil, as well as a light sensor pointed at the same square as the pencil. When you push the button, you can see the pencil either make or erase a mark on the square underneath, and then the paper moves to the left or right.

Mathematically speaking, the state of the tape is given by an element of \{ \square, \blacksquare \}^\mathbb{Z} \times \mathbb{Z}; that is, a choice of empty or filled for each square (and there are \mathbb{Z} squares; I told you that the tape was long), and a choice of position on the tape.

Making this all work is a control center attached to the pencil, light sensor, roll, button, and second, smaller black box. We call this control center the handler, and the smaller black box the inner machine.

When the button is pushed, the handler checks the light sensor. Depending on the value of the light sensor, the handler pushes one of two buttons on the inner machine. The inner machine then either displays on its screen a pair left/right arrow and a filled/empty square, or simply the colors blue/red and retracts its buttons. If the inner machine displays an arrow and a square, then the handler writes or erases the current square, moves the tape in the direction of the arrow, and then displays green on the outer button. If the inner machine lights up blue or red, then the handler lights up the outer button blue or red.

More precisely, this inner machine is a coalgebra on the following polynomial. q = (\{\leftarrow,\rightarrow\} \times \{\square,\blacksquare\}) y^{\{\square,\blacksquare\}} + \{{\color{blue} B},{\color{red} R}\} The point of the “handler” is to take a coalgebra on q and produce a coalgebra on p. And this is what the rest of the post will be; we will learn how to formalize this notion of handler.

3 Handlers

Before we get into the math, I want to say a little bit about what exactly a handler is. Essentially, we can think about a polynomial coalgebra as a “state machine with side effects”. Handlers “handle” the side effects, by updating the internal state of the handler and also possibly by producing some other side-effects. And then, handlers translate the results of those side effects back into the original state machines. If you think about the definition of polynomial composition, you will see that this is precisely what is described by the following definition.

We call this an elementary handler because there is a more sophisticated definition of handler that goes between polynomial comonoids. However, we will not treat this definition in this blog post, so when we say “handler” in the remainder of this blog post, we will mean elementary handler.

We can now formalize the handler for Turing machines with this definition.

The final puzzle piece is how does one apply a handler to a coalgebra. As a coalgebra is simply a handler with codomain 0, this can be accomplished by “handler composition”.

There is essentially nothing to say about the proof of this proposition; we have constructed a polynomial map by composition of other polynomial maps and no properties need hold.

Now that we have this proposition, we can compose a handler p \blacktriangleleft\!\!\overset{m}{\sout{\,\,\phantom{m}\,\,}}\!\!\blacktriangleleft q with a q-coalgebra q \blacktriangleleft\!\!\overset{S}{\sout{\,\,\phantom{S}\,\,}}\!\!\blacktriangleleft 0, and we immediately get a p-coalgebra p \blacktriangleleft\!\!\overset{m \triangleleft S}{\sout{\,\,\phantom{m \triangleleft S}\,\,}}\!\!\blacktriangleleft 0.

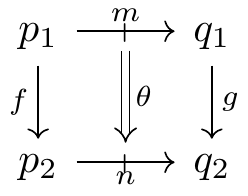

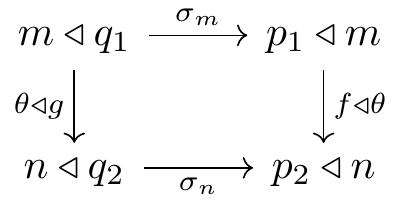

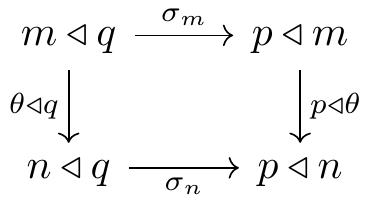

As we have a composition, it is natural to develop a category of handlers. However, as \triangleleft is only associative up to isomorphism, we must instead develop a bicategory or double category of handlers. We thus make the following definition.

The proof that this is a bicategory follows from an embedding of handlers into the bicategory of bicomodules; we will omit this here.

Following the maxim that all bicategories want to be double categories, there is also a double category of handlers.

4 Conclusion

This blog post has only scratched the surface of handlers. David and I are very interested in using this framework to model stateful, side-effecting composition; we hope to find a stateful “internal language” of this double category that will allow us to “compile” code into handlers. There is also a ton of interesting math connected to the handler framework; I presented the most basic version of handlers, but when you add comonoids to the picture the theory becomes much richer.

Additionally, I would like to say that while this formulation of handlers is original to me and David, algebraic effects system are a very active field of research within functional programming languages currently, with implementations in various stages of maturity in Haskell, Scala, and Ocaml. I am hoping that our work into handlers will understand various features like looping and mutation at a lower level than these algebraic effects, which are tied to a high-level programming language that already has recursion and advanced data structures. Our polynomial handlers can model CPUs and assembly language; my hope is build a “full-stack” abstraction that can handle compilation of higher levels into lower levels, while having the full power of Poly at every level.

Footnotes

This raises some other questions, like “how long do I have to sit here pushing this button.” It turns out that for a large class of possible box-innards there’s no way to know for sure whether the button will ever turn blue or red; this is known as the “Halting Problem.”↩︎